AI Examples

AI for Community Newsletters

Professional Services

The Prompt delivers interactive, engaging AI trainings and advisory services that make AI practical, human-centered, and actionable for leaders and teams.

Erika Kosina uses AI to provide efficient and cost-effective content marketing planning, story-driven content creation, and campaign measurement & recommendations for nonprofits.

NonprophetAI provides consulting services and a Capacity Command Center product for nonprofits looking to leverage AI to lighten staff load while keeping humans in the loop.

Books & Articles

- One Useful Thing: Ethan Mollick’s series of very good articles, on Substack

- Co-Intelligence. Ethan Mollick’s book on our relationship with AI.

- The Alignment Problem. Great book on challenges with AI (bias, incorrect goals, etc.).

- Will the Humanities Survive AI? New Yorker article (might be behind paywall). A sample quote: “But to be human is not to have answers. It is to have questions—and to live with them. The machines can’t do that for us. Not now, not ever.”

- Machines of Loving Grace. Dario Amodei (co-founder and CEO of Anthropic) writes about the potential benefits of what he terms “powerful AI”. The term is interesting: he’s trying to stay out of the discussion of AGI (Artificial General Intelligence) and instead focus on what happens when everyone has access to AI that can, in many or most areas, outperform a human expert.

- Situational Awareness. Series of articles on what could happen with AI, over the next few years. Good reading if you want bad sleeping.

- I’m a Therapist. ChatGPT Is Eerily Effective. New York Times essay (might be behind paywall) by a very experienced therapist about his experience using ChatGPT.

- Life on Claude Nine. A short story, written from the perspective of a coder using Claude, on what might go wrong. It’s not what I’d call “hard sci-fi”, in that it’s hard to imagine the big step of Claude both becoming actually sentient and being able to take control of infrastructure (I guess PG&E’s archaic computer systems could be seen as defense against an AI power-grab).

- The Adolescence of Technology. This is a long, thoughtful discussion of AI risks by Dario Amodei, co-founder and CEO of Anthropic (Claude). It’s worth reading, if this is something you care about. And yes, he obviously has a dog in this hunt, but he is also one of the most thoughtful and well-informed writers on the risks of AI.

Environmental Issues

Below are links to articles that I think are worth reading, when thinking about the impact that AI has on our environment.

- The AI Water Issue is Fake. I heard Andy Masley interviewed on Hard Fork, and read his article to dig deeper. He provides good analysis of actual water usage, which is very, very small when compared to almost everything else (like golf courses, or a hamburger). I did the math, and skipping one hamburger would save enough water for more than 7 million simple prompts.
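The hamburger comparison is a simple division, and a minimal sketch of it is below. The two input figures are assumptions chosen only for illustration (roughly 660 gallons of water per hamburger and 0.000085 gallons per simple prompt). The inputs actually used for the estimate above aren't stated here, so swap in whatever numbers you trust.

```python
# Illustrative inputs only: these are assumed figures, not numbers
# taken from the article or from the estimate described above.
HAMBURGER_WATER_GALLONS = 660.0   # assumed water footprint of one hamburger
PROMPT_WATER_GALLONS = 0.000085   # assumed water use of one simple prompt


def prompts_per_hamburger(hamburger_gallons: float, prompt_gallons: float) -> float:
    """Return how many prompts use as much water as one hamburger."""
    if prompt_gallons <= 0:
        raise ValueError("prompt_gallons must be positive")
    return hamburger_gallons / prompt_gallons


result = prompts_per_hamburger(HAMBURGER_WATER_GALLONS, PROMPT_WATER_GALLONS)
print(f"{result:,.0f} prompts per hamburger")
```

With these assumed inputs the result lands above 7 million prompts. The conclusion is sensitive to both figures, so the order of magnitude matters more than the exact count.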

Policies

A few organizations have published their guidelines for AI usage, which can be a useful starting point for creating your own policy.

- AI for Community Guidelines. This isn’t called an AI Policy for a reason. It’s clear (to us, at least) that the only useful way to help everyone use AI both effectively and responsibly is to spell out which items are hard-and-fast rules, which are cautions, and which are recommendations, along with the why behind each one.

- The Responsible AI Manifesto. A blog post by The Marketing Artificial Intelligence Institute. The “Our Responsible AI Principles” section lists 12 principles, including the one that I emphasize in training: “We believe that humans remain accountable for all decisions and actions, even when assisted by AI. The human must remain in the loop in all AI applications.”

- Fast Forward’s AI Policy. This is a pretty good starting point.

- Candid’s Responsible AI use policy blog post.

- California Courts Model Policy. The basic requirements for every California court’s AI policy (which can be different, court-by-court). A court can prohibit AI, but if it allows AI then its policy must (a) prohibit uploading confidential information, (b) prohibit the use of AI to discriminate, (c) require court personnel to make reasonable efforts to verify AI output and correct mistakes, (d) require court personnel to make reasonable efforts to remove biased AI output, (e) require that all output generated entirely by AI be labeled as such, and (f) require compliance with all applicable laws and policies relating to AI.

- Nonprofit Policy Builder. Fast Forward has an interactive website (powered by Chatbase, which is an AI chatbot tool) where you can answer questions about your nonprofit and get a customized policy. I’ve tried it, and it did work – here’s the policy it created. But there were a LOT of questions, and the policy still felt pretty generic.

In addition, a local supporter (Gretchen Bond) sent me this text, which she attached to a board report she’d worked on using AI. I think it’s a great example of being clear about how AI was used, and also owning responsibility for the final product:

In the spirit of transparency, portions of this report were refined with the help of AI tools to enhance clarity and structure. The insights and recommendations remain my own and reflect my review, guidance, and professional judgment.

AI Skeptics

This is a collection of links to content where the point-of-view is strongly opposed to AI. I find that reading these is helpful in assessing the pros and cons of this amazing and scary new technology.

- Ed Zitron’s Where’s Your Ed At

- Cassie Willson’s Facebook Rant (turn on sound by clicking tiny speaker icon at top of video)

- MIT Tech Review’s article: We did the math on AI’s energy usage. Normally I really like what Tech Review publishes, but this article was verging on journalistic innumeracy.

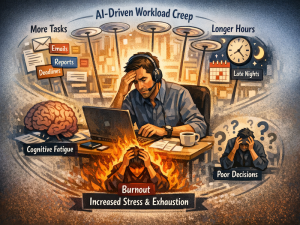

- Your Brain on ChatGPT. Published results of a study analyzing the impact of relying too much on ChatGPT to think for you.

- AI: What Could Go Wrong? Jon Stewart interviews Geoffrey Hinton, aka the “Godfather of AI”. They talk about how AI actually works, in a very approachable way, and then dive into potential threats posed by AI.

- AI Policy. Amanda Gailey is an associate professor of English at the University of Nebraska–Lincoln. She’s written a document titled “AI Policy”, but it’s not actually a policy – it’s her personal view of why using AI is a horrible idea.

Fun Stuff

A few things for your entertainment…

- PromptLibs. A web page created by my friend Matt Strain, where you can build a prompt by picking options from lists. Wait, isn’t he in marketing? How did he create an interactive web page? Vibe coding (via Lovable) for the win!

- When you use ChatGPT for everything. A humorous take on people becoming overly dependent on AI.

- Gemini songwriting is…not great. A friend who is testing Gemini’s new support for creating songs made this one for me. I think the prompt was to create a song that would inspire me to write more donation appeals (which is the hardest thing I have to do). If you give it a listen, I think you’ll understand why I think Gemini songwriting has areas for improvement. Though the last promo whisper at the end of the song has me questioning whether I’ve been saying “Gemini” wrong all these years. Maybe it’s actually “Gem in I”.